Mitsuba 2: A Retargetable Forward and Inverse Renderer

http://rgl.epfl.ch/publications/NimierD ... 19Mitsuba2

MIS Compensation: Optimizing Sampling Techniques in Multiple Importance Sampling

https://cgg.mff.cuni.cz/~jaroslav/paper ... index.html

CGI tech news box

Re: CGI tech news box

Selectively Metropolised Monte Carlo light transport simulation

For this indoor scene lit by a sun/sky environment emitter, path tracing resolves most of the lighting well, but introduces high variance in the reflective caustic cast by the mirror. ERPT and RJMLT resolve the caustic better, but do not handle diffuse lighting well and introduce noise and temporal flickering. Our method uses MLT to only resolve the difficult paths using bidirectional path tracing, and handles everything else with path tracing, leading to significantly improved MSE (relative MSE shown in insets) and vastly reduced temporal flickering. Scene ©SlykDrako.

Abstract

Light transport is a complex problem with many solutions. Practitioners are now faced with the difficult task of choosing which rendering algorithm to use for any given scene. Simple Monte Carlo methods, such as path tracing, work well for the majority of lighting scenarios, but introduce excessive variance when they encounter transport they cannot sample (such as caustics). More sophisticated rendering algorithms, such as bidirectional path tracing, handle a larger class of light transport robustly, but have a high computational overhead that makes them inefficient for scenes that are not dominated by difficult transport. The underlying problem is that rendering algorithms can only be executed indiscriminately on all transport, even though they may only offer improvement for a subset of paths. In this paper, we introduce a new scheme for selectively combining different Monte Carlo rendering algorithms. We use a simple transport method (e.g. path tracing) as the base, and treat high variance “fireflies” as seeds for a Markov chain that locally uses a Metropolised version of a more sophisticated transport method for exploration, removing the firefly in an unbiased manner. We use a weighting scheme inspired by multiple importance sampling to partition the integrand into regions the base method can sample well and those it cannot, and only use Metropolis for the latter. This constrains the Markov chain to paths where it offers improvement, and keeps it away from regions already handled well by the base estimator. Combined with stratified initialization, short chain lengths and careful allocation of samples, this vastly reduces non-uniform noise and temporal flickering artifacts normally encountered with a global application of Metropolis methods. 
Through careful design choices, we ensure our algorithm never performs much worse than the base estimator alone, and usually performs significantly better, thereby reducing the need to experiment with different algorithms for each scene.
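To make the partitioning idea concrete, here is a minimal 1D sketch, not the paper's algorithm. It assumes a uniform base pdf and a hypothetical clamping weight `weight(x, threshold)` that leaves at most `threshold` of each sample's contribution to the base estimator. The remainder is integrated by a midpoint rule that merely stands in for the Metropolised method. The toy integrand `f` and all names are illustrative.

```python
import random


def f(x):
    """Toy integrand on [0, 1]: a smooth term plus a narrow spike (the 'caustic')."""
    spike = 200.0 if 0.500 <= x < 0.505 else 0.0
    return 1.0 + spike


def weight(x, threshold):
    """Hypothetical partition weight: fraction of f(x) left to the base estimator.

    With a uniform base pdf p(x) = 1, the base contribution f/p is capped at
    `threshold`; the remainder (1 - w) * f is handed to the second method.
    """
    fx = f(x)
    return 1.0 if fx <= threshold else threshold / fx


def base_estimate(n, threshold, rng):
    """Plain Monte Carlo on the well-behaved part w * f (bounded by threshold)."""
    total = 0.0
    for _ in range(n):
        x = rng.random()
        total += weight(x, threshold) * f(x)
    return total / n


def residual_estimate(n, threshold):
    """Stand-in for the Metropolised method: midpoint rule on (1 - w) * f."""
    total = 0.0
    for i in range(n):
        x = (i + 0.5) / n
        total += (1.0 - weight(x, threshold)) * f(x)
    return total / n


if __name__ == "__main__":
    rng = random.Random(0)
    threshold = 4.0
    estimate = base_estimate(20000, threshold, rng) + residual_estimate(1000, threshold)
    print(estimate)  # close to the true integral, 2.0
```

Because `w * f + (1 - w) * f = f`, the two parts always sum to the full integral. With `threshold = 4`, the base part integrates to about 1.015 and the residual to 0.985, and no single base sample can exceed the threshold.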

Adaptive Environment Sampling on CPU and GPU

Yet still, portals don't produce splotches and flickering.

// V-ray Next implements the method as described in the paper (supplemental, slides.pdf, slide notes)

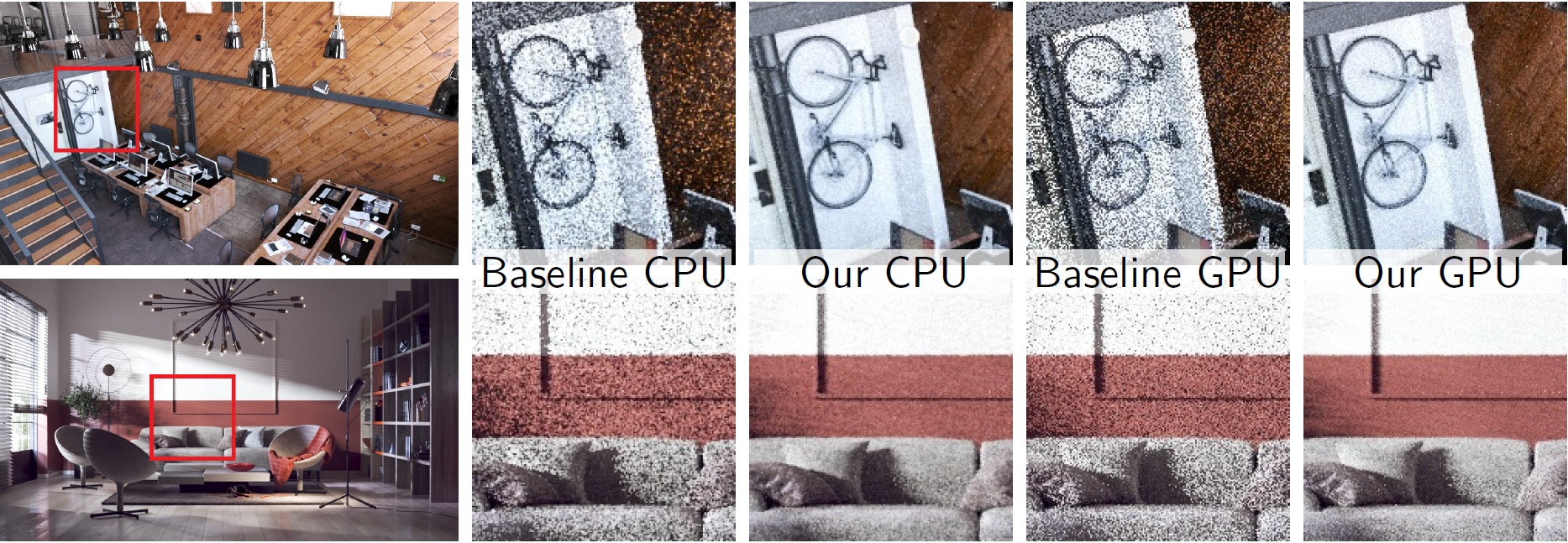

"Office" and "Living room" scenes rendered with classical environment sampling (Baseline) and our adaptive strategy. We present both CPU and GPU implementation results and show that our algorithm produces much cleaner images in the same time. The effective speedup, measured as the time to achieve the same noise level, for CPU/GPU implementations is, respectively: "Office" - 6.6/3.8 and "Living room" - 2.7/2.4. "Office" scene courtesy of Evermotion.

Abstract

We present a production-ready approach for efficient environment light sampling which takes visibility into account. During a brief learning phase we cache visibility information in the camera space. The cache is then used to adapt the environment sampling strategy during the final rendering. Unlike existing approaches that account for visibility, our algorithm uses a small amount of memory, provides a lightweight sampling procedure that benefits even unoccluded scenes and, importantly, requires no additional artist care, such as manual setting of portals or other scene-specific adjustments. The technique is unbiased, simple to implement and integrate into a render engine. Its modest memory requirements and simplicity enable efficient CPU and GPU implementations that significantly improve the render times, especially in complex production scenes.
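One way to picture the learning phase for a single cache cell is sketched below. This is an illustration under assumptions, not the paper's data structure: the environment is split into `num_regions` hypothetical regions, shadow-ray results are accumulated per region, and a uniform defensive mix (`uniform_fraction`, an invented parameter) keeps every region's probability above zero so that sampling stays unbiased.

```python
def learn_region_pdf(visibility_samples, num_regions, uniform_fraction=0.1):
    """Turn learning-phase shadow-ray results into a sampling pdf for one cell.

    visibility_samples: iterable of (region_index, contribution) pairs, where
                        contribution is 0.0 when the shadow ray was occluded.
    uniform_fraction:   hypothetical defensive mix; guarantees that no region
                        ends up with zero probability.
    """
    totals = [0.0] * num_regions
    for region, contribution in visibility_samples:
        totals[region] += contribution

    uniform = 1.0 / num_regions
    learned_sum = sum(totals)
    if learned_sum == 0.0:
        # Nothing was learned for this cell: fall back to uniform sampling.
        return [uniform] * num_regions

    return [
        (1.0 - uniform_fraction) * t / learned_sum + uniform_fraction * uniform
        for t in totals
    ]


if __name__ == "__main__":
    samples = [(0, 1.0), (0, 1.0), (2, 2.0), (1, 0.0)]
    print(learn_region_pdf(samples, num_regions=4))
```

Regions that were always occluded during learning keep a small probability instead of being dropped, which is the price paid for not biasing the result when the cache is wrong.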

Re: Adaptive Environment Sampling on CPU and GPU

Ohoh, their solution, when compared to the LuxCore Env. Light Visibility Cache, is a classic trade-off between memory usage and quality: ELVC requires a LOT more memory/pre-processing to work well, but it delivers better results (if you use enough memory/pre-processing). Otherwise the 2 solutions are quite similar.

However, the good news is this paper has the piece of the puzzle I was missing for ELVC: the last step. I'm using an env. light visibility map (i.e. a pixel image). The ELVC problem is that you need very high resolution maps for it to work well. This costs both a LOT of memory and pre-processing time: more pixels, more shadow rays to trace.

This is a 1-level hierarchy solution. If I use a 2-level hierarchy where one map pixel points to an env. light tile and I sample the tile according to the usual light intensity (i.e. classic importance sampling), I can use visibility maps that are a loooot smaller and so also a loooot faster to build.

Now, it is optimal:

- one visibility map pixel => one HDR pixel

While, for instance, with tiles:

- one visibility map pixel => one HDR 8x8 tile (64 times fewer pixels to store and 64 times faster pre-processing !!!!!!) => an HDR tile pixel picked according to importance sampling

I ... need ... to ... write this code ....
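The two-level idea described above could look roughly like this. It is a sketch under assumptions, not LuxCore code: `tile_visibility` is a hypothetical per-tile weight map standing in for the visibility map, `luminance` is the HDR luminance image, and the CDFs are rebuilt on every call for brevity where a real implementation would precompute them.

```python
import random
from bisect import bisect_right
from itertools import accumulate


def build_cdf(weights):
    """Normalised cumulative distribution for a list of non-negative weights."""
    total = float(sum(weights))
    if total <= 0.0:
        raise ValueError("weights must sum to a positive value")
    return [c / total for c in accumulate(weights)]


def sample_cdf(cdf, u):
    """Return (index, probability) of the entry selected by u in [0, 1)."""
    i = min(bisect_right(cdf, u), len(cdf) - 1)
    prev = cdf[i - 1] if i > 0 else 0.0
    return i, cdf[i] - prev


def sample_env_pixel(tile_visibility, luminance, tile_size, rng):
    """Two-level pick: tile by visibility weight, then pixel by luminance.

    tile_visibility: 2D list, one learned weight per tile (hypothetical cache).
    luminance:       2D list, HDR luminance per environment pixel.
    Returns ((row, col), discrete probability of that pixel).
    """
    tiles_per_row = len(tile_visibility[0])
    flat_tiles = [w for row in tile_visibility for w in row]
    t, p_tile = sample_cdf(build_cdf(flat_tiles), rng.random())
    ty, tx = divmod(t, tiles_per_row)

    # Luminance of the pixels inside the chosen tile, in row-major order.
    pix = [luminance[ty * tile_size + j][tx * tile_size + i]
           for j in range(tile_size) for i in range(tile_size)]
    k, p_pix = sample_cdf(build_cdf(pix), rng.random())
    j, i = divmod(k, tile_size)
    return (ty * tile_size + j, tx * tile_size + i), p_tile * p_pix


if __name__ == "__main__":
    vis = [[0.0, 1.0], [0.0, 0.0]]           # only the top-right tile is visible
    lum = [[1.0] * 4 for _ in range(4)]
    lum[0][3] = 3.0                           # one bright pixel in that tile
    print(sample_env_pixel(vis, lum, 2, random.Random(1)))
```

The returned probability is the product of the tile and pixel probabilities, which is what an estimator would divide by (after converting to a solid-angle density for the chosen environment mapping).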

Re: Adaptive Environment Sampling on CPU and GPU

Dade wrote: Mon Oct 14, 2019 11:13 pm
Ohoh, their solution, when compared to the LuxCore Env. Light Visibility Cache, is a classic trade-off between memory usage and quality: ELVC requires a LOT more memory/pre-processing to work well, but it delivers better results (if you use enough memory/pre-processing). Otherwise the 2 solutions are quite similar.

However, the good news is this paper has the piece of the puzzle I was missing for ELVC: the last step. I'm using an env. light visibility map (i.e. a pixel image). The ELVC problem is that you need very high resolution maps for it to work well. This costs both a LOT of memory and pre-processing time: more pixels, more shadow rays to trace.

This is a 1-level hierarchy solution. If I use a 2-level hierarchy where one map pixel points to an env. light tile and I sample the tile according to the usual light intensity (i.e. classic importance sampling), I can use visibility maps that are a loooot smaller and so also a loooot faster to build.

Now, it is optimal:

- one visibility map pixel => one HDR pixel

While, for instance, with tiles:

- one visibility map pixel => one HDR 8x8 tile (64 times fewer pixels to store and 64 times faster pre-processing !!!!!!) => an HDR tile pixel picked according to importance sampling

I ... need ... to ... write this code ....

Hopefully this also means the region/border rendering will work much better too.

Re: Adaptive Environment Sampling on CPU and GPU

lacilaci wrote: Tue Oct 15, 2019 4:29 am
Hopefully this also means the region/border rendering will work much better too

It is not related. It has a different explanation and an easy workaround.

Re: CGI tech news box

"I ... need ... to ... write this code ...."

Just want to be a little mouse sitting behind your computer screen.

Re: CGI tech news box

Yes, it is on me (or anyone else who could work on the Blender addon) to fix this in the addon by using LuxCore's subregion.

epilectrolytics

Re: Adaptive Environment Sampling on CPU and GPU

kintuX wrote: Mon Oct 14, 2019 9:49 pm
Adaptive Environment Sampling on CPU and GPU

It sounds like this helps not only with direct light, like ELVC, but also with indirect light?

"A fixed number of camera paths are traced in the scene and for each path vertex – whether due to a primary ray or a secondary, GI ray – we determine its corresponding grid cell by projecting the vertex back to the camera center."

Would this work with BiDir(VM) too?

(Looks like a simple way of path guiding (MIS) to me.)
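The cell lookup in the quoted passage can be sketched with a simple pinhole projection. Everything here is an assumption for illustration: the function name `grid_cell`, the orthonormal camera basis, the vertical field of view, and the choice to return `None` for vertices outside the frustum (the quoted text does not say how those are handled).

```python
import math


def grid_cell(vertex, cam_pos, right, up, forward, fov_y_deg, aspect, grid_w, grid_h):
    """Map a world-space path vertex to a camera-space grid cell.

    The vertex is projected toward the camera center with a pinhole model.
    right/up/forward are assumed to form an orthonormal camera basis.
    Returns (col, row), or None if the vertex falls outside the view frustum.
    """
    d = [v - c for v, c in zip(vertex, cam_pos)]
    x = sum(a * b for a, b in zip(d, right))
    y = sum(a * b for a, b in zip(d, up))
    z = sum(a * b for a, b in zip(d, forward))
    if z <= 0.0:
        return None  # behind the camera

    tan_half = math.tan(math.radians(fov_y_deg) / 2.0)
    # Normalised screen coordinates in [0, 1), origin at the top-left corner.
    sx = 0.5 + 0.5 * (x / z) / (tan_half * aspect)
    sy = 0.5 - 0.5 * (y / z) / tan_half
    if not (0.0 <= sx < 1.0 and 0.0 <= sy < 1.0):
        return None
    return int(sx * grid_w), int(sy * grid_h)


if __name__ == "__main__":
    cam = dict(cam_pos=(0.0, 0.0, 0.0), right=(1.0, 0.0, 0.0), up=(0.0, 1.0, 0.0),
               forward=(0.0, 0.0, 1.0), fov_y_deg=90.0, aspect=1.0,
               grid_w=16, grid_h=16)
    print(grid_cell((1.0, 1.0, 4.0), **cam))   # a primary hit
    print(grid_cell((2.0, 2.0, 8.0), **cam))   # a deeper vertex on the same camera ray
```

Two vertices on the same ray through the camera center land in the same cell regardless of depth, so in this sketch the lookup itself does not distinguish a primary hit from a GI vertex.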